Everything’s Broken, Everything’s Too Complicated

Today I took (and thankfully, given everything else that happened, passed) a Microsoft Azure certification exam. Of course I’m under an NDA on the exam, so I won’t talk about the exam itself, but as it happens that’s not what I want to talk about anyway. Instead I want to talk about all the stuff that was required to get to the point of taking the exam – because it was a summary of today’s software environment: everything was broken, everything was too complicated.

The saga of Launching The Exam

Because, as you may have noticed, we’re in the midst of a global pandemic, I chose to take the exam online from the comfort and non-infectiousness of my apartment using a Pearson proctoring service. Essentially, you take some pictures to show your workspace doesn’t have a bunch of cheat sheets posted on the wall, install some software on your computer that turns it into a glorified security camera with a secure browser that won’t let you do anything on the computer besides take the exam, and answer the questions while a proctor watches you remotely. Easy peasy. The power of modern technology working for you.

Or is it? I did some prep work last week, including carefully reading the legal disclosures and instructions and working through all of the recommended tests, and I started checking in fifteen minutes early today – yet I almost missed the exam due to a string of technical problems. Here’s what happened.

I had already run the system check the week prior, which verifies that your computer has a solid Internet connection and can run the exam software, and that the webcam and microphone work properly. Everything checked out. What I didn’t do was log into the Microsoft portal and try starting an exam, because the system check was accessible without going through that portal. And why would I have expected this would be a problem? I had an email right in my inbox with a link to start the exam.

Well, when 7:15 rolled around this morning, I couldn’t log in. I have three separate Microsoft accounts, which of course cannot be merged and all have important data in them. I don’t know how I ended up with three. One of them is connected to my workplace, while the other two are “personal” accounts – but one of the “personal” accounts has the same exact email address as my workplace account, which isn’t confusing at all given that your email address is your username. I couldn’t remember which of them I had signed up for the exam under. When I logged into my workplace-email personal account, I somehow ended up in my other personal account, and neither that account nor my workplace-email work account had the exam listed under “appointments”. I had to go reset my password to get into the other “personal” account, because that password was evidently only in my password manager at work and not in my password manager at home. Then I realized that in order to receive the password-reset email, I had to go find my work laptop, boot it up, connect to the VPN, and launch Outlook, which took about 5 minutes. After resetting my password and logging in successfully, I was presented with an error screen telling me to try clearing my browser cache (which did nothing) or use Microsoft Edge or Internet Explorer (I was taking the test on a Mac). I tried in a different browser, and it still didn’t work. There were now 2 minutes remaining before the time I was supposed to begin check-in. Finally I tried in a third browser – lucky I even had one installed, because in what situation do you need three web browsers on one computer? – and, miraculously and for no logical reason whatsoever, it worked.

So now I was logged into the site. Next I had to have the system text a code to my phone and follow instructions to snap pictures of my workspace (with no indication of how wide the shot should be, or any other guidance) and of my government ID. Each picture took about a minute to “upload and verify.” Then I sat for 10 minutes staring at my face in the webcam waiting for the proctor to show up. Then the screen just went black, the computer’s fan went up to maximum speed, and it sat there for about 5 minutes. Finally a “proctor chat” window appeared, followed by an audio call on the computer in which the proctor’s voice was garbled and nearly inaudible. The proctor had to call my cell phone so we could talk while fixing the problem. She told me to “press and hold the power button,” but my MacBook Air doesn’t have a power button; pressing any key turns the machine on. Luckily for me, I happened to remember that depressing and holding the Touch ID sensor powers off the computer (duh, how could you not know that?), and was able to reboot successfully. I was then asked to take the pictures again, but I couldn’t receive the texted link to upload them because the Google Voice app was occupied by my conversation with the proctor, so I had to switch to a browser and manually type in the URL (fortunately that option was available). Finally I was able to get in and start the exam. No explanation, of course, as to why it locked up my computer the first time and worked fine the second time despite my doing nothing different.

Not an exception

So that’s my story of the day…but you’ve heard this story before. As a matter of fact, something not unlike this probably happens to you on a weekly basis. Everything now relies on software – as Marc Andreessen famously said in 2011, “software is eating the world.” And software almost never works right, at least not software complex enough to eat the world.

To fix this particular problem and start the exam, I had to know and understand many items of what should be esoteric trivia at best. Here are twelve, and I’m sure I didn’t get them all:

- The passwords to three different accounts, and how to reset the one I didn’t know because it was in the wrong password manager. I already have to have a whole piece of software to remember my passwords for my software, and even that didn’t work.

- You can have multiple Microsoft accounts.

- You can have two Microsoft accounts under the same username, with different passwords and different contents.

- You have to log in to your Microsoft account – the right Microsoft account – to open the exam program, even though you already have an email sent specifically to you indicating your exam is ready.

- I could try resetting my password to see if that makes Microsoft figure things out when I’m literally getting logged into a different account than the one I typed in.

- Something about a “browser cache”?

- What the heck Microsoft Edge is, and that I can’t use it on this computer anyway. (Have you ever used Microsoft Edge, other than accidentally, or maybe to download a different browser?)

- There are multiple web browsers, I should probably have several that I don’t use installed on my computer to deal with nonsense like this, and the third one will sometimes work where the first two didn’t.

- Pressing down and holding the fingerprint reader turns off the computer. (This might be the dumbest one – leave it to Apple to be too edgy for power buttons. By the way, the “any key turns on the computer” design is also really fun when you need to clean the keyboard, especially during a pandemic. Multiple people have written entire apps that lock the keyboard so you can wipe it down without risking accidentally deleting something – a task the rest of the world accomplishes by, you know, turning the power off. And of course, icing on the cake, several of the keyboard-locking apps you find when you Google this problem don’t actually work.)

- While on a call on your iPhone, you can go to a different app while continuing the conversation, but only if you put the call on speakerphone first.

- I can’t receive a text message while on the phone, but only because I happen to be using Google Voice on an iPhone, which combines these two only-vaguely-related functions in a single app that can do only one of them at a time.

- I can type the URL into a browser instead of clicking on it in a text message. No instructions on opening an appropriate browser on a phone were provided, of course.

You probably have had to memorize hundreds, if not thousands, of similar pieces of trivia to get through your everyday life. You may not even realize you’re doing it, because it’s come to feel normal. And the moment you need one of the pieces you never happened to learn, you’re in trouble until you find someone else who knows it.

This is not just a frustrating waste of time, it is impractical, unfair, and exclusionary. I’m known for being unusually quick on my technical feet, and dealing with this kind of crap is literally my full-time job as a systems developer and DevOps engineer, and I almost missed the exam. What in tarnation are other people supposed to do? I actually have no idea what other people do. I guess they are just routinely unable to do important things at all, or have to call Microsoft and yell for an hour to get a refund and a rescheduled exam.

Scott Hanselman, a big-name developer at Microsoft, wrote an under-referenced rant in 2012 called Everything’s broken and nobody’s upset. Seriously, how are we all living as if this is acceptable or normal?

Pushing the envelope

Here’s my guess. First of all, recognize that people tend to “push the envelope” – if something becomes possible, they’ll do it. In today’s tech-first political and social climate, if something becomes possible and has a vague air of coolness, it will rapidly not just be done but become required. The problem is that it’s many of the world’s smartest people, often working in unusually smart ways, who are out there pushing the envelope, building these systems. Even they are only just able to pull it off. That leaves everyone else trying to catch up and learn to fix all these stupid, complicated problems so they can live their lives normally in the new system.

Many people can do that, with a great deal of effort, but some outright can’t, or simply don’t want to. Some would say those people are just not “with the times” and they need to work on their skills so they don’t “get behind” in the “knowledge economy.” Leaving aside the basic absurdity of the idea that every person in the world is capable of doing a good job at any given task, much less something as specialized and complicated as fixing computers, nobody seems to take this attitude with other skills. For instance, I don’t know a whole lot about cars. I can drive my car (at an above-average level, of course, because who thinks they’re a below-average driver?). I have a vague mechanical idea of the components of an engine and how a car works, but not enough to do anything useful with that knowledge. With the help of the manual and maybe YouTube, I can top off some fluids or change the windshield wipers or jump-start it if I leave the lights on in the middle of a Minnesota winter, but if it gets more complicated than that I’m going to leave the rest to a professional. What if someone came and told me that, as of 2021, I had to start spending my free time learning how to tear down and rebuild my car’s engine just so I could get to work, and if I didn’t I was limiting my options in life? I would be pretty pissed off, that’s what.

Yeah, sure, being able to work with a computer is a valuable skill, I’m not going to deny that, especially in countries where most of the jobs besides office work, service industries, and retail have gone offshore. This blog exists because I believe in offering people who want them the tools to improve their lives through technology, and I believe people are often more capable of learning those tools than they think they are. And sure, using a computer is a more versatile skill than fixing a car. But you can’t claim that fixing a car isn’t a useful and marketable skill as well. There’s not that much difference. To the extent that there is a difference, a large part of that difference is that we’ve designed our world so that people who aren’t highly computer-literate, who don’t want to spend their time fixing their computers, are left in the dust.

How big a problem is this? Or, how many people aren’t computer-literate? If you are reading this post and haven’t researched this topic, I can all but guarantee it’s more than you think. User-interface design expert Jakob Nielsen summarizes the results of a giant lab study of users’ computer skills in his aptly named article The Distribution of Users’ Computer Skills: Worse Than You Think. The study took place from 2011–2015, all over the world, enrolling over 200,000 adults from age 16–65.

A typical task demonstrating “level 3” proficiency (the hardest level of task tested) was, “Find what percentage of emails sent by John Smith last month were about sustainability.” This kind of task may not be something you have to do every day, but you can probably explain right now how you would accomplish it. And this task requires nothing more than an email program, a calculator, a pad of paper, and a bit of persistence to complete successfully (heck, you can take out the pad of paper and the persistence if a search-in-text for the word “sustainability” is sufficient to identify an email as being about sustainability, and the calculator if you’re good at mental math or OK with an estimate). Yet, in the very richest and most computer-literate countries, only 8% of participants could complete this task.

Let me say that again: Between 92% and 95% of working-age adults in rich, industrialized countries worldwide could not perform this task, which likely seems straightforward to most readers of this blog. And that’s exactly the problem. Most of the world’s smartest technologists probably think 50% of people – if not more – can use complicated software or troubleshoot problems with their Microsoft login or their browser cache without screaming in frustration or giving up, so they can make the world more complicated with impunity. Because of the Dunning-Kruger effect, clearing the browser cache seems incredibly straightforward to those who do it all the time, but proficiency requires learning a number of technical terms and procedures and doing it often enough that you remember it’s a thing at all. For developers, it hardly registers when things break all the time and we have to carry out standard or even novel troubleshooting procedures, because we spend all day fixing things we broke anyway, and we’re good at it. Unless, of course, we happen to be trying to take an exam in 15 minutes, or doing something that ought to be simple and has suddenly become maddeningly difficult.

Fixes?

I try never to end an essay presenting a problem without offering some solutions, or at least some suggestions for incremental improvement. In this case, it might be fair to simply link to the Control-Alt-Backspace manifesto and leave it at that, but several more specific points are worth bringing up here.

First, can I point out that authentication sucks? Proving you are who you say you are is an enormously difficult problem, in real life or on the Internet (it’s just worse on the Internet because when several billion Internet users can pretend to be you from anywhere, at any time, trying millions of times a second with different inputs, visually comparing photos on a driver’s license doesn’t cut it). I don’t have any suggestions for fixing this one, but I just want to get this off my chest.

I have over 250 passwords stored in my home password manager, a separate entry for every single application that I use in the entire world. Sometimes a password I am quite certain is right doesn’t log me in. Every website has a different password policy. Sometimes I have to use a different email address too because the site claims my (actually valid) email address containing a plus sign is invalid (big names in the hall of shame: Microsoft, Discover, CenturyLink). Sometimes I fill in a 20-character password and the site only accepts 16 characters, but doesn’t say so until I set the password successfully and try to log in (then I have to use the “forgot password” function to try again). The US Treasury makes me type my password in on a virtual keyboard with the mouse.
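As an illustration of how a site can end up rejecting a perfectly valid plus-addressed email, here is a sketch of two hypothetical sign-up validators written as grep patterns. Neither is taken from any real site, and the names naive, better, and check are made up for this example. The "better" pattern is still a simplification of the address grammar in RFC 5322, which does allow "+" in the part before the "@".

```sh
#!/bin/sh
# Hypothetical validator #1: forgets that "+" is legal before the "@",
# so plus-addressed mail is wrongly rejected.
naive='^[A-Za-z0-9._-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}$'
# Hypothetical validator #2: still a simplification, but it allows "+".
better='^[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}$'

# check PATTERN ADDRESS: print "accepted" if the whole address matches.
check() {
  if printf '%s\n' "$2" | grep -Eq "$1"; then
    echo accepted
  else
    echo rejected
  fi
}

addr='jane.doe+bank@example.com'
echo "naive:  $(check "$naive" "$addr")"
echo "better: $(check "$better" "$addr")"
```

The only difference between the two patterns is a single character in the first bracket expression, which is roughly the size of mistake that locks a plus address out of a sign-up form.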

I could avoid some of these problems by using simpler, easy-to-crack passwords and a single email address, and then I’d instead get spammed, forget my off-by-one-character-to-satisfy-the-password-policy passwords, and have my data and identity stolen. You can’t win, whatever route you choose. Whoever can safely and securely solve the annoyances of authentication so that we no longer have to waste hours of our time every year logging in to things will be the world’s next billionaire.

And again. This is me. I’m writing about the things I know the most about. I use tools specifically designed to keep my accounts secure and keep track of my logins. Where does that leave the other 95% of people?

Secondly. Two problems stand at the root of this craziness: software (1) is too complicated and (2) changes too quickly. These may seem to be separate problems at first, but they’re tightly connected, because software that’s too complicated usually has to change frequently. This may be because the software itself is so complicated it is full of bugs, or because it depends on 30 pieces of other software that each can release bugfixes or new features and force updates to the main software, or because the complexity means the authors are willing to toss new features and user-interface “upgrades” in at the drop of a hat.

Let me be clear. I am an Agilist; I believe software that’s released early and often and that plans for change as the only constant is better software. I am not against frequent releases per se. I am against frequent releases of functionality that force users to change while not making the software appreciably better. And I am against the belief that software should always be changing. The best software is software that rarely requires any changes, and certainly not changes that force users to change their behavior, because it already reliably does everything its users need it to do. When changes are required, or it’s possible to add new, broadly useful functionality without disrupting existing users, then the developers respond and release those changes quickly. This ideal is only achievable with simple software.

Let’s take the Unix family of operating systems. There have been and continue to be many versions of Unix (colloquially Unices or *nixes) – System V, Plan 9, AIX, Solaris, Linux, BSD, Android, and so forth – but they all share a common design and heritage. Since we’re talking about software eating the world, Unix has definitely eaten the world. Your MacBook, your iPhone, your Android phone, your smart thermostat, most of the servers that run the Internet and send you web pages, your ATM, and countless other devices wouldn’t turn on without Unix-family operating systems. Even Windows – the flagship product of a company whose CEO infamously called Linux a “cancer” in 2001 – now has support for an embedded Linux environment. Almost no computing device nowadays is free from the influence of Unix, and it’s not unreasonable to imagine that if Unix disappeared from every computer tomorrow, civilization would collapse.

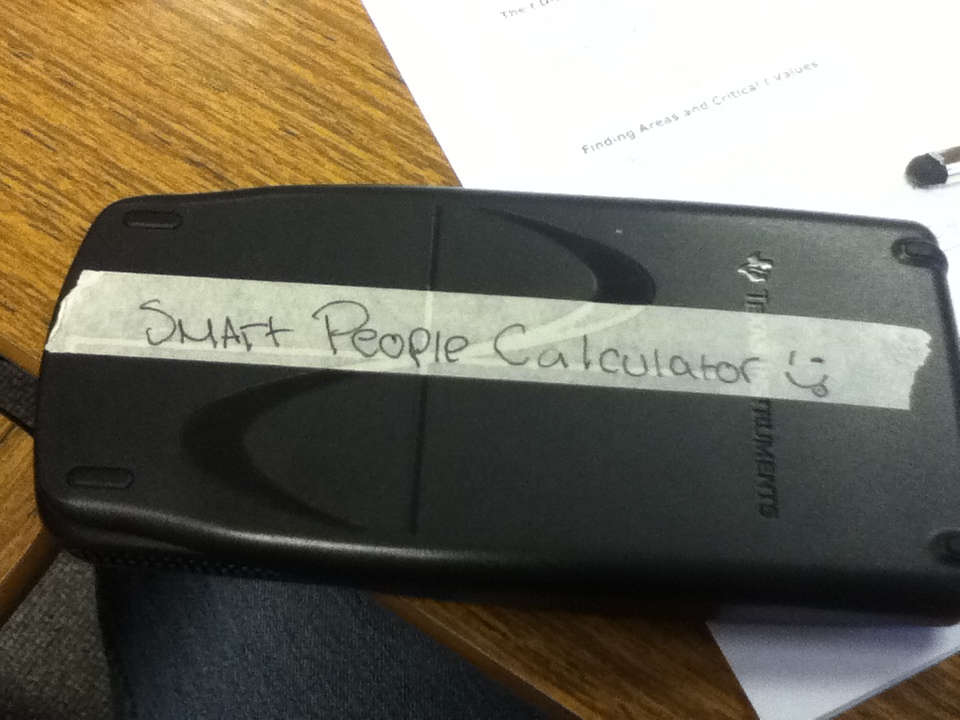

Yet despite its ubiquity as the underlying substructure of other systems, Unix (usually in the Linux flavor) used directly on a typical desktop computer for everyday work continues to be considered a “smart people operating system,” like this graphing calculator the girl next to me in a high-school class I once took had:

It’s not hard to understand either why the hacker-types who spend their days and nights in front of a computer, and who make all the decisions about what tools will run your thermostat and phone, love it, or why everyone else thinks it’s not for them. Unix is irregular, idiosyncratic, complicated, and deeply dangerous if misused (accidentally wrecking the system is almost a rite of passage for new Linux users, and a quickly dashed-off sudo rm -rf /* will permanently delete all your files). Where there are any safeguards at all, you can easily remove them and shoot yourself in the foot. Unix is full of frustrating limitations you have to work around. It relies on users doing “extra work” to put together simple pieces to form their own workflows.

When you look at this command I used this weekend to sort a table of data, what else is there to say other than that Unix is hard to learn and reserved for people who are excellent at and enjoy learning rapidly changing, complex skills?

```sh
awk 'NR<2{print; next}{print $0 | "sort -t, -k2,2 -k1,1"}' status.csv | column -s, -t
```
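To see what each piece of that one-liner contributes, here is a small run against a made-up status.csv. The file name comes from the command; its two columns (name, status) and its rows are invented for illustration.

```sh
#!/bin/sh
# Write a small, made-up status.csv: a header row plus four data rows.
cat > status.csv <<'EOF'
name,status
web02,down
db01,ok
web01,down
cache01,ok
EOF

# NR<2{print; next}  -> line 1 (the header) is printed as-is, then skipped.
# print $0 | "sort …" -> every other line is fed to a single sort process,
#                       split on commas (-t,), ordered by field 2 alone
#                       (-k2,2), with ties broken by field 1 alone (-k1,1).
# column -s, -t       -> split on commas and pad the fields into aligned columns.
awk 'NR<2{print; next}{print $0 | "sort -t, -k2,2 -k1,1"}' status.csv | column -s, -t
```

The header stays on top because awk prints it itself and hands only the remaining lines to sort; sort cannot emit anything until it has read all of its input, and common awk implementations flush their own output before starting the sort process. The result is the rows grouped by status (down before ok) and alphabetized by name within each group.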

Yet there’s something crucial that Unix gets right that almost nobody else does, a key component of Unix’s smashing success and one that I think should recommend Unix to a broader audience – and if not Unix itself, at the very least its principles. It’s this: Unix is rock solid and interoperable and fundamentally doesn’t change. The Unix of today is more or less the Unix of the early 1980s. Sure, my 2020 Arch Linux distribution has a lot more software on it, and all the basic tools have more options. I have Internet connectivity, and I can install software in seconds that would have taken the old-timers days to order on tape, compile, and configure (or more likely, write from scratch). Some system configuration files are in new locations, or contain new ways of naming things. But if someone from 1980 time-traveled to 2020 and sat down in front of the command prompt at my Linux computer, they would still find it familiar, and if I sat with them for an hour or two to teach them the things that have changed, chances are they would easily be able to do real work on it.

That awk command up above? I could have written that in the 80s, and it would still work today. Even better, the way things are going, chances are good it will still work in 2060. (The Lindy effect says that non-perishable things like technologies or books age in reverse – that is, the longer they survive, the longer their expected future lifespan. To be more precise, their average future life expectancy is about equal to their current lifespan.)
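One common way to make the Lindy effect precise, offered only as a sketch under an assumption the effect itself does not guarantee, is to model a technology’s total lifetime T as a Pareto (power-law) distribution with minimum t_m and tail exponent α. Given that it has already survived to age t, its expected remaining life is then proportional to t, and exactly equal to t when α = 2:

$$
P(T > t) = \left(\frac{t_m}{t}\right)^{\alpha}, \qquad t \ge t_m,\ \alpha > 1
$$

$$
\mathbb{E}[T \mid T > t] = \frac{\alpha}{\alpha - 1}\,t
\quad\Longrightarrow\quad
\mathbb{E}[T - t \mid T > t] = \frac{t}{\alpha - 1} \;=\; t \ \text{ when } \alpha = 2
$$

As a rough worked example: awk first appeared in 1977, so in 2020 it was 43 years old, and with α = 2 the model predicts about 43 more years, or roughly 2063.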

To put this another way, if you’re starting out your career today and you learn awk, there’s a solid chance you can still be using awk when you retire, with some minor new features you can ignore if you don’t need them. Is there any other computer system you use that you can say this about? A few other systems, like COBOL or DOS batch files, are still around and still work, but most of them are considered dead and kept around largely to keep old software running. The Unix command line is very much alive and actively in use for new development.

When things change this slowly, the new features – and the occasional simple, powerful new program to deal with a problem that really didn’t exist or wasn’t adequately addressed before – become useful new tools in your computer-user’s toolbox, not an extra burden to learn. I get the feeling that most people don’t hate software primarily because it’s complicated, hard to learn, and requires memorizing idiosyncratic pieces of trivia, but rather because the moment you finally figure out how to do something, it changes. (One is reminded of Douglas Adams, in The Restaurant at the End of the Universe: “There is a theory which states that if ever anyone discovers exactly what the Universe is for and why it is here, it will instantly disappear and be replaced by something even more bizarre and inexplicable. There is another theory which states that this has already happened.”) Unix almost never breaks backwards compatibility: if a command works now, there had better be a darn good reason for it to stop working in a future version. Sure, Unix is harder to learn up front than, say, Gmail – maybe a lot harder – but once you master it, you’re done. You can use a computer and make it work for you. Full stop.

The world would be vastly better if we applied the Unix design principles to more software: make it only as complex as absolutely required, sacrifice even correctness for the sake of simplicity, make each small program do one thing well, and design small programs to integrate with each other. Instead we go for massive, complex, expensive, and broken – and nobody cares.
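To make “do one thing well” and “integrate with each other” concrete, here is a sketch in the spirit of Doug McIlroy’s well-known word-frequency pipeline (with head standing in for his sed): six standard tools, none of which knows anything about counting words, chained with pipes. The function name top_words and the sample sentence are made up for illustration.

```sh
#!/bin/sh
# top_words [N]: read text on stdin, print the N most frequent words
# (default 10), each preceded by its count. Every stage is a stock tool.
top_words() {
  tr -cs 'A-Za-z' '\n' |   # runs of non-letters become one newline: one word per line
    tr 'A-Z' 'a-z' |       # fold everything to lower case
    sort |                 # bring identical words next to each other
    uniq -c |              # collapse each run into "count word"
    sort -rn |             # highest count first
    head -n "${1:-10}"     # keep only the top N lines
}

printf '%s\n' 'The cat saw the dog. The dog saw the cat run.' | top_words 3
```

Nothing in that pipeline is specific to word counting; swap the first stage or the last and the same pieces answer a different question, which is exactly the kind of reuse the principles above are after.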

I’ve previously written about Unix and the Unix philosophy in Dreamdir and the Unix Philosophy, or Likable Software and Just Get Started. Also related is A Complete Definition of Badness, which explores the reasons we favor shiny features and get stuff that’s bad and doesn’t work.